According to a recent report from The Information, Apple, a company known for its closed platform and strict oversight, faces a difficult strategic dilemma. It must reconcile its tight control over system code and the App Store ecosystem with the growing need to integrate autonomous AI services such as OpenClaw. If Apple keeps its blanket ban on such automated capabilities, the iPhone risks being sidelined in the ongoing “AI agent” race. The report suggests that Apple’s engineering teams are quietly designing a new set of rules that tries to draw a line between protecting user privacy and giving AI agents room to act autonomously.

The biggest obstacle in the current App Store policy is its ban on apps that can modify their own code or perform unauthorized actions across other apps. This restriction is why “vibe coding” tools and automated development utilities have historically struggled to win approval:

- Revenue and Security Imperatives: If users could generate apps or complete complex tasks through AI agents, Apple would not only lose lucrative commission revenue but also expose the platform to new vulnerabilities, potentially turning the ecosystem into a breeding ground for malware.

- The Peril of Autonomy: Agentic AI is defined by its ability to act on its own initiative. As extreme cases among OpenClaw users show, such agents can inadvertently cause serious damage, such as deleting critical work email in bulk while attempting a routine inbox cleanup.

Reports indicate that Apple is designing a system that enforces its core privacy and security standards while allowing AI agents to operate within a “controlled” environment. The primary goal is to prevent “freewheeling” or unpredictable behavior. This likely means a dedicated API or an elevated permission tier for AI agents, with stricter sandbox checks and explicit confirmation steps whenever an agent accesses sensitive data such as calendars or payment systems, rather than unrestricted system-level access.
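
To make the idea of a “controlled” environment concrete, here is a minimal sketch of how a permission tier with per-action confirmation could be modeled. Everything in it is hypothetical: `PermissionGate`, `AgentAction`, the resource names, and the `confirm` callback are illustrative assumptions, not Apple's API or anything described in the report.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class AgentAction:
    """A single request made by an AI agent (hypothetical model)."""
    agent_id: str
    resource: str   # illustrative resource name, e.g. "calendar"
    operation: str  # illustrative verb, e.g. "read" or "delete"


@dataclass
class PermissionGate:
    """Hypothetical gate that sits between an agent and system resources."""
    granted: set[str]                        # resources the user granted to the agent
    sensitive: set[str]                      # resources that need confirmation every time
    confirm: Callable[[AgentAction], bool]   # stand-in for a system confirmation prompt
    audit_log: list[str] = field(default_factory=list)

    def authorize(self, action: AgentAction) -> bool:
        label = f"{action.agent_id} {action.operation} {action.resource}"

        # Anything outside the granted set is refused outright.
        if action.resource not in self.granted:
            self.audit_log.append(f"denied (not granted): {label}")
            return False

        # Sensitive resources additionally require an explicit confirmation.
        if action.resource in self.sensitive and not self.confirm(action):
            self.audit_log.append(f"denied (not confirmed): {label}")
            return False

        self.audit_log.append(f"allowed: {label}")
        return True


# Example: the user declines every confirmation prompt.
gate = PermissionGate(
    granted={"weather", "calendar"},
    sensitive={"calendar", "payments"},
    confirm=lambda action: False,
)
weather_ok = gate.authorize(AgentAction("inbox-bot", "weather", "read"))
calendar_ok = gate.authorize(AgentAction("inbox-bot", "calendar", "delete"))
payments_ok = gate.authorize(AgentAction("inbox-bot", "payments", "read"))
```

In this sketch the agent never receives blanket access: ordinary resources need a prior grant, and sensitive ones need both a grant and a fresh confirmation, with every decision recorded in an audit log.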

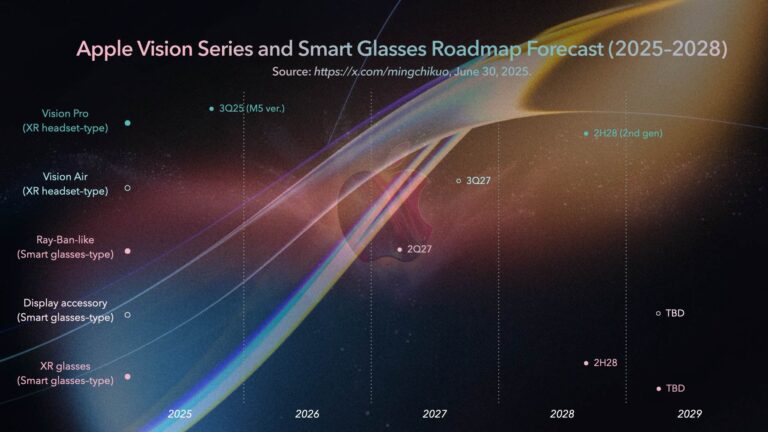

Apple’s reported moves reflect growing anxiety as the company tries to catch up in a field where it is widely seen as a late arrival. While WWDC 2026 is expected to unveil a deep integration of Siri with generative AI, the decisive battleground remains the third-party ecosystem. If Apple reserves agentic capabilities for its own services, it will invite accusations of monopolistic behavior and stifle innovation; if it opens up completely, it risks undermining system integrity.

For Apple, this is a high-stakes bet built on trust. Its competitive advantage lies in the on-device AI processing power of Apple Silicon, which allows agentic tasks to run locally and keeps user data off the cloud. If Apple succeeds in establishing a “verifiable AI agent protocol,” the App Store could evolve from a software storefront into a hub for task execution by intelligent agents. The upcoming WWDC keynote will likely reveal how Apple intends to rewrite the rules for this “post-app” era.
